Container Orchestration for DevOps Engineers

Welcome to this week's issue on Container and Codes. In this newsletter, we discuss working with and managing multiple containers in an application, and how container orchestration saves engineers headaches.

Multiple Containers

In our last publication, we discussed how containers are used in application deployments to package applications and enable them to be deployed on different infrastructure environments.

Containers provide an easy, standardized way to package and run applications. However, as with many things in computing, issues can arise when dealing with containers at scale. Managing a few containers is straightforward, but most real-world applications run on many containers. As the number of containers in an application grows, so does the need for more advanced administration and management. This is where container orchestration becomes important.


So what exactly is container orchestration?

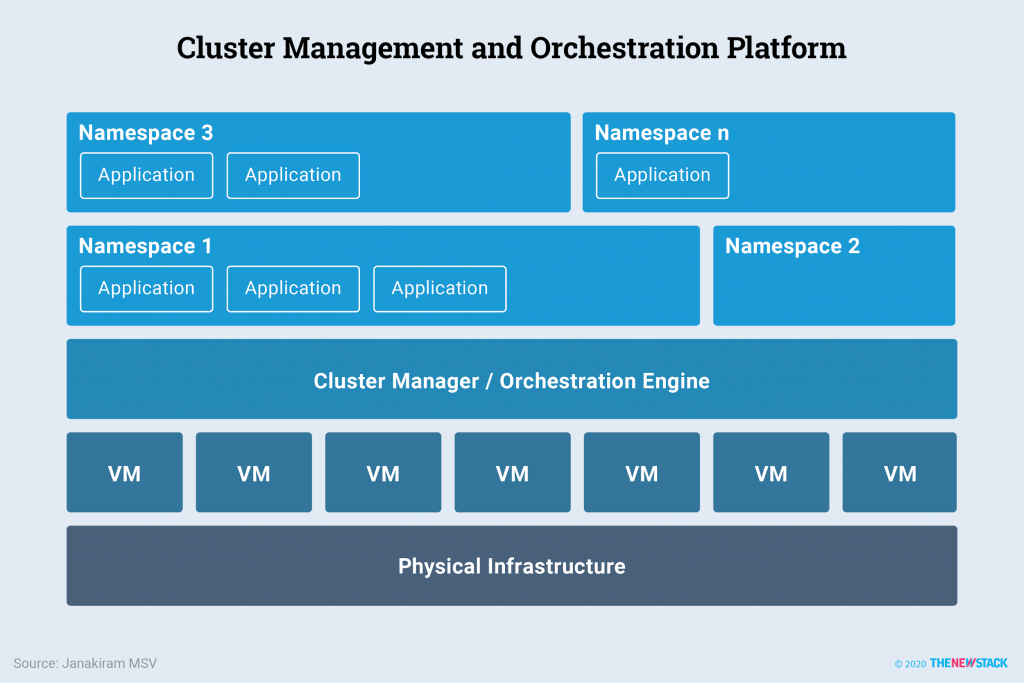

Container orchestration is the process of managing and administering multiple containers within a cluster. It covers everything involved in automatically provisioning, deploying, scaling, monitoring, and upgrading containers.

Challenges with Containers

When containers were first introduced, they changed the way applications were packaged and deployed. In the early days, it was common for a product to be deployed and run on just a few containers. As containers grew more popular and microservice architectures (in which an application is made up of many separate, loosely coupled services that usually run as containers) became widely adopted, new challenges emerged. These challenges are most noticeable when many containers are managed manually. Challenges of manual container management include:

Scaling: When working on an application, it is important to plan for the application growing to accommodate more users and features. For containerized applications, this could mean adding more containers or increasing and reducing the resources (CPU and memory) of a container on demand. Done manually, this quickly becomes a nightmare. Scaling containers out, in, up, and down on demand needs to be automated to avoid excessive administrative overhead.

Deployment Complications: Deploying applications or application updates into containers is another area where engineers managing multiple containers manually face major challenges. To do this by hand, engineers would need to know the application version currently running on every container, the specific containers an application should be deployed to, the status of each deployment, and much more. Rolling back changes would be another major battle. Container orchestrators can automate and handle this work.

Troubleshooting Challenges: Troubleshooting requires proper observability and visibility into the events occurring in the applications running in the containers. As the number of containers grows, so does the scope of troubleshooting, until finding an issue in a single container becomes like finding a needle in a haystack.

Communication Inefficiency between Containers: Networking among multiple containers is a hassle with manual container management. Service discovery, routing, and many other networking operations need automation to reduce errors when working at scale.
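To make the deployment and rollback problem concrete, here is a minimal Python sketch of the kind of work an orchestrator automates: upgrading containers in batches and reverting everything if a batch fails a health check. The function name `rolling_update`, the batch size, and the health-check callback are hypothetical assumptions for illustration. Real orchestrators such as Kubernetes implement rolling updates with many more options.

```python
from typing import Callable, List


def rolling_update(
    versions: List[str],
    new_version: str,
    is_healthy: Callable[[int], bool],
    batch_size: int = 1,
) -> List[str]:
    """Upgrade containers batch by batch, rolling back on a failed health check.

    versions[i] is the application version running on container i.
    is_healthy(i) reports whether container i is healthy after its upgrade
    (a hypothetical callback standing in for a real health check).
    Returns the final versions; the input list is never modified.
    """
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    current = list(versions)
    for start in range(0, len(current), batch_size):
        batch = range(start, min(start + batch_size, len(current)))
        for i in batch:
            current[i] = new_version
        # Verify the whole batch before moving on; roll everything back on failure.
        if not all(is_healthy(i) for i in batch):
            return list(versions)
    return current
```

For example, with five containers on `"v1"` and `batch_size=2`, passing `lambda i: i != 3` as the health check makes the second batch fail, so every container ends up back on `"v1"`.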

Effective container orchestration makes it possible to manage the large number of containers that many modern applications need. Important activities such as load balancing, service discovery between containers, traffic routing, sensitive data management, and monitoring across multiple containers can be automated and performed seamlessly.

In today's newsletter, we discuss the benefits of container orchestration and how it helps organizations and engineering teams scale effectively. We cover the main operations of container orchestrators and some examples of them, and finally take an in-depth look at one of the most popular container orchestrators today: Kubernetes.

How Does Container Orchestration Solve These Problems?

Container orchestration automates the processes needed to manage container infrastructure, such as deployment, scaling, scheduling, configuration, networking, and security, freeing engineers to focus on more critical tasks. The common design in container orchestration is a controller-and-worker architecture (historically called master and slave), in which the control plane is made up of components that manage the worker nodes by enforcing policies, collecting information, and issuing commands to the workers. Some of the tasks performed by container orchestrators include:

Scaling: Orchestration provides an automated way of handling container resources by automatically scaling up or down to handle increasing or decreasing requests.

Deployments: Orchestration provides an automated and efficient way to handle the complexity of deployments in a microservice application. Microservice applications often have complex deployment strategies in which services are rolled out sequentially or concurrently, and container orchestration handles these requirements efficiently.

Scheduling: A container orchestrator monitors node resources, requirements, and policies and uses this information to automatically assign pods (groups of one or more containers) to specific nodes, ensuring that each pod is placed on the most suitable node.

Load Balancing and Traffic Routing: Container orchestrators handle network operations such as directing traffic to nodes and containers, routing network communication, and managing DNS.

Service Discovery: A container orchestrator provides a means for different services in containers to find and communicate with each other through service discovery.

Security: Container orchestrators implement security policies on containers and clusters and provide options for configuring them, including secret management, identity management, and network communication security.

Health Monitoring: An orchestrator automatically monitors the resource utilization and other performance information of the containers and can easily integrate with other monitoring tools like Prometheus to provide system metrics.
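The automated scaling described above can be made concrete with the ratio documented for the Kubernetes Horizontal Pod Autoscaler: desiredReplicas = ceil(currentReplicas × currentMetricValue / desiredMetricValue). The Python sketch below applies that formula and clamps the result to a replica range. The function name and the default bounds are assumptions, and the real autoscaler also applies a tolerance and stabilization behavior that this sketch leaves out.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_metric: float,
    target_metric: float,
    min_replicas: int = 1,   # assumed default bounds, for illustration only
    max_replicas: int = 10,
) -> int:
    """Replica count from the HPA-style ratio, clamped to [min_replicas, max_replicas]."""
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    # Scale proportionally to how far the observed metric is from the target.
    raw = math.ceil(current_replicas * current_metric / target_metric)
    return max(min_replicas, min(max_replicas, raw))
```

With 4 replicas averaging 90% CPU against a 60% target, the ratio calls for `ceil(4 * 90 / 60) = 6` replicas (scaling out); at 20% CPU it calls for `ceil(80 / 60) = 2` (scaling in).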

Popular Container Orchestration Platforms

Container orchestration platforms provide a means to automate container management activities and processes. Most of them use a control plane and worker node architecture: components on the control plane perform administration, while the worker nodes host the containers and receive and execute commands from the control plane.

A container orchestrator can be either managed or unmanaged. The two categories differ in how much control an engineer has over the control plane components.

In a managed container orchestrator, such as Amazon EKS, Google GKE, or Azure AKS, the cloud provider (AWS, Google Cloud, or Azure) is responsible for running the control plane infrastructure and resources, at an additional cost to the user. This removes a significant part of the operational overhead from the team and lets it focus on other critical responsibilities.

In an unmanaged (self-managed) container orchestrator, by contrast, the orchestrator is deployed on nodes and servers that you manage yourself. This gives you complete control of the cluster and lets you customize components to best fit your use case, but it also makes you fully responsible for managing and maintaining the platform.

Some of the most common container orchestration tools are:

Docker Swarm

Apache Mesos

HashiCorp Nomad

Red Hat OpenShift

SUSE Rancher, and of course

Kubernetes

Kubernetes

Kubernetes is an open-source container orchestration tool originally developed by Google, building on lessons from its internal cluster management system, Borg. Google donated Kubernetes to the Cloud Native Computing Foundation (CNCF) in 2015, and today Kubernetes is the de facto container orchestration tool for most engineering teams.

Control Plane: At its most basic level, Kubernetes operates with a master-slave (control plane and worker) architecture. The control plane server is the master node and contains the components that manage the worker nodes and the pods running on them.

Worker Node: The worker node is the server managed by the control plane. It hosts the pods and other components that handle the application workload.
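One way to picture how the control plane manages the worker nodes is the control-loop pattern that Kubernetes controllers follow: compare the desired state with the current state and act on the difference. The Python sketch below is a simplified, hypothetical version that only tracks replica counts per application; the function name and the action strings are assumptions, and real controllers watch the API server and handle many more kinds of objects.

```python
from typing import Dict, List


def reconcile(desired: Dict[str, int], actual: Dict[str, int]) -> List[str]:
    """Return the actions needed to move actual replica counts toward desired ones.

    Applications present only in `actual` are treated as having a desired count of 0.
    """
    actions: List[str] = []
    for name in sorted(set(desired) | set(actual)):
        want = desired.get(name, 0)
        have = actual.get(name, 0)
        if have < want:
            actions.append(f"create {want - have} pod(s) for {name}")
        elif have > want:
            actions.append(f"delete {have - want} pod(s) for {name}")
    return actions  # an empty list means the cluster already matches the desired state
```

Calling `reconcile({"web": 3, "api": 2}, {"web": 1, "api": 2, "old": 1})` returns `["delete 1 pod(s) for old", "create 2 pod(s) for web"]`. A controller would run this comparison repeatedly, so the cluster keeps converging even after failures.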

Some Control Plane Components:

1. kube-apiserver: This is the control plane component that exposes the Kubernetes API. It services REST operations on Kubernetes objects and provides the front end through which users and other components interact with the cluster.

2. etcd: This is a key-value store that serves as the database or datastore for all cluster data. It is designed to be highly available and should be configured to be reliable with backups, especially in a production cluster.

3. kube-scheduler: This component decides which node each pod is assigned to. Newly created pods need to run on a node that can support their workloads, so kube-scheduler selects the most appropriate node by considering factors such as resource requirements, hardware/software/policy constraints, affinity and anti-affinity specifications, data locality, inter-workload interference, and deadlines.

4. kube-controller-manager: This component runs the controller processes on the cluster. Examples include the node controller, job controller, EndpointSlice controller, and ServiceAccount controller.
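kube-scheduler's decision can be thought of as two phases: filtering out nodes that cannot run the pod, then scoring the remaining nodes. The Python sketch below models only CPU and memory requests. The `Node` and `PodRequest` types and the scoring rule (prefer the node with the most CPU left after placement, then the most memory) are simplified assumptions. The rule is loosely similar in spirit to a "least allocated" strategy, not the scheduler's actual plugins.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Node:
    name: str
    free_cpu_millicores: int
    free_memory_mib: int


@dataclass
class PodRequest:
    cpu_millicores: int
    memory_mib: int


def schedule(pod: PodRequest, nodes: List[Node]) -> Optional[str]:
    """Return the name of the chosen node, or None if no node fits."""
    # Filter phase: keep only nodes with enough free CPU and memory.
    feasible = [
        n for n in nodes
        if n.free_cpu_millicores >= pod.cpu_millicores
        and n.free_memory_mib >= pod.memory_mib
    ]
    if not feasible:
        return None  # in Kubernetes, such a pod would remain Pending
    # Score phase: prefer the most CPU left after placement, then the most memory.
    best = max(
        feasible,
        key=lambda n: (
            n.free_cpu_millicores - pod.cpu_millicores,
            n.free_memory_mib - pod.memory_mib,
        ),
    )
    return best.name
```

Comparing remaining CPU and memory as a tuple avoids adding millicores to mebibytes, which would mix units. A real scheduler normalizes each resource and combines scores from several plugins.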

Some Worker Node Components include:

1. Kubelet: This is the Kubernetes agent that runs on each node. It registers the node with the cluster and makes sure the containers in the pods assigned to that node are running and healthy.

2. kube-proxy: kube-proxy is a network proxy that runs on each node in the cluster. It maintains the network rules that allow network communication to pods from inside and outside the cluster.

3. Container Runtime: A container runtime is responsible for running and managing containers on a node. Popular runtimes include containerd and CRI-O.
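Service discovery and load balancing, which Kubernetes provides through cluster DNS together with kube-proxy on each node, can be sketched as a registry that maps a service name to its pod endpoints and hands out those endpoints in turn. In this Python sketch, the `ServiceRegistry` class, the addresses, and the round-robin policy are illustrative assumptions. Only the `<service>.<namespace>.svc.cluster.local` naming follows the Kubernetes DNS convention, where `cluster.local` is the default cluster domain. kube-proxy itself may choose backends randomly or round-robin, depending on its mode.

```python
from typing import Dict, List


def service_fqdn(service: str, namespace: str = "default",
                 cluster_domain: str = "cluster.local") -> str:
    """Build a Kubernetes-style DNS name for a Service."""
    return f"{service}.{namespace}.svc.{cluster_domain}"


class ServiceRegistry:
    """Hypothetical registry mapping service names to endpoints, balanced round-robin."""

    def __init__(self) -> None:
        self._endpoints: Dict[str, List[str]] = {}
        self._next: Dict[str, int] = {}

    def register(self, name: str, address: str) -> None:
        endpoints = self._endpoints.setdefault(name, [])
        if address not in endpoints:
            endpoints.append(address)

    def deregister(self, name: str, address: str) -> None:
        endpoints = self._endpoints.get(name, [])
        if address in endpoints:
            endpoints.remove(address)

    def pick(self, name: str) -> str:
        """Return the next endpoint for a service in round-robin order."""
        endpoints = self._endpoints.get(name)
        if not endpoints:
            raise LookupError(f"no endpoints registered for {name}")
        index = self._next.get(name, 0) % len(endpoints)
        self._next[name] = index + 1
        return endpoints[index]
```

A client resolves `service_fqdn("payments", "shop")`, which is `"payments.shop.svc.cluster.local"`, and successive `pick` calls alternate between the registered pods. When a pod is deregistered, traffic simply stops going to it.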

Getting Started with Kubernetes

New to Kubernetes?

That’s no issue at all. Kubernetes is an open-source project and as such has a vibrant community behind it to help you learn various topics and concepts. You can become a part of our community at KubeCounty and learn various fundamentals about Kubernetes and other useful DevOps and Cloud Engineering tools.

Kubernetes also provides excellent documentation that you can consult at any time to learn about its tools and features.


Would you like to learn more about DevOps engineering or improve your experience with various DevOps and Cloud projects?

KubeCounty offers FREE online classes, webinars, and practical projects curated to help you develop your knowledge and experience.


For more discussions like this, follow KubeCounty on Twitter @KubeCounty and engage in our discussion.